Note: This tutorial was generated from an IPython notebook that can be downloaded here.

Gaussian process models for stellar variability

When fitting exoplanets, we also need to fit for the stellar variability, and Gaussian processes (GPs) are often a good descriptive model for this variation. PyMC3 has support for all sorts of general GP models, but exoplanet also includes support for scalable 1D GPs (see Scalable Gaussian processes in PyMC3 for more info) that can work with large datasets. In this tutorial, we walk through modeling the light curve of a rotating star observed by Kepler using exoplanet.

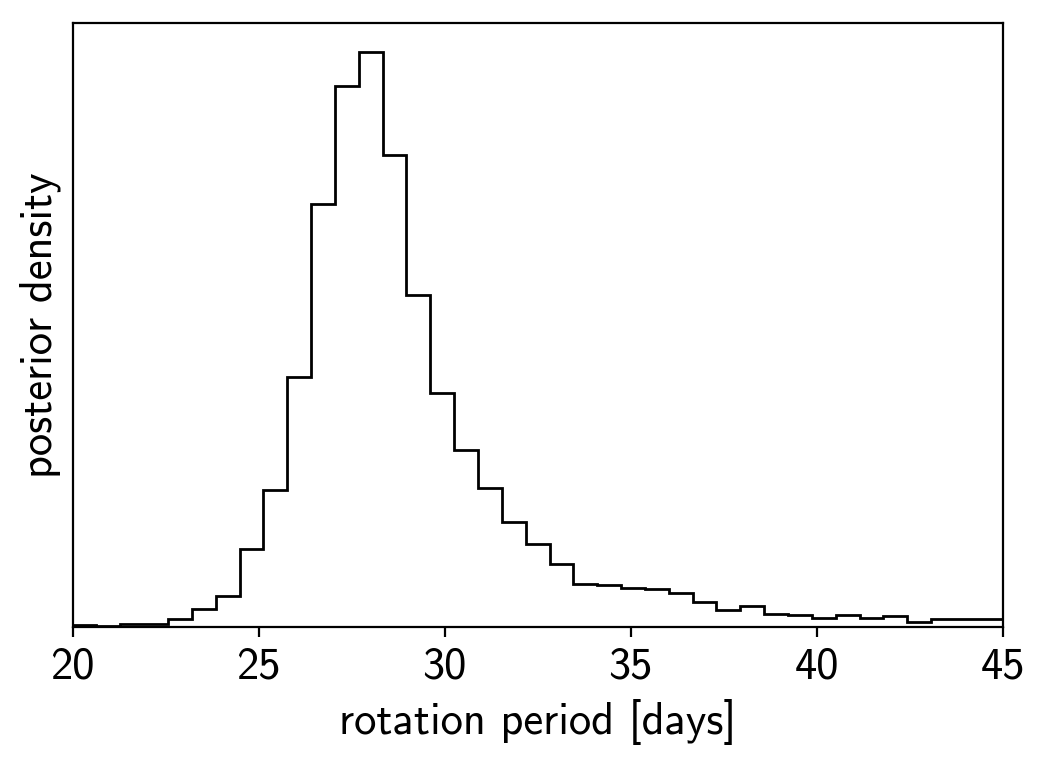

First, let’s download and plot the data:

```python
import numpy as np
import matplotlib.pyplot as plt
from astropy.io import fits

url = "https://archive.stsci.edu/missions/kepler/lightcurves/0058/005809890/kplr005809890-2012179063303_llc.fits"
with fits.open(url) as hdus:
    data = hdus[1].data

x = data["TIME"]
y = data["PDCSAP_FLUX"]
yerr = data["PDCSAP_FLUX_ERR"]

# Keep good-quality cadences with finite time and flux
m = (data["SAP_QUALITY"] == 0) & np.isfinite(x) & np.isfinite(y)

x = np.ascontiguousarray(x[m], dtype=np.float64)
y = np.ascontiguousarray(y[m], dtype=np.float64)
yerr = np.ascontiguousarray(yerr[m], dtype=np.float64)

# Convert to relative flux in parts per thousand (ppt)
mu = np.mean(y)
y = (y / mu - 1) * 1e3
yerr = yerr * 1e3 / mu

plt.plot(x, y, "k")
plt.xlim(x.min(), x.max())
plt.xlabel("time [days]")
plt.ylabel("relative flux [ppt]")
plt.title("KIC 5809890");
```
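The cleaning step above keeps only cadences with SAP_QUALITY == 0 and finite time and flux, then rescales the flux to parts per thousand (ppt) about its mean. As a minimal sketch, here is the same transformation wrapped in a function and run on a few synthetic values; the name to_relative_ppt and the toy arrays are hypothetical, not part of exoplanet or the Kepler data.

```python
import numpy as np


def to_relative_ppt(time, flux, flux_err, quality):
    """Hypothetical helper: drop bad cadences and express flux in ppt about its mean."""
    # Same mask as the tutorial: good quality flag, finite time and finite flux
    m = (quality == 0) & np.isfinite(time) & np.isfinite(flux)
    t = np.ascontiguousarray(time[m], dtype=np.float64)
    f = np.ascontiguousarray(flux[m], dtype=np.float64)
    e = np.ascontiguousarray(flux_err[m], dtype=np.float64)
    mu = np.mean(f)
    # Relative flux in parts per thousand; the errors are scaled by the same factor
    return t, (f / mu - 1) * 1e3, e * 1e3 / mu


# Synthetic stand-in arrays (not real Kepler data)
time = np.array([0.0, 0.02, 0.04, np.nan, 0.08])
flux = np.array([999.0, 1001.0, np.nan, 999.0, 1000.0])
flux_err = np.full(5, 0.5)
quality = np.array([0, 0, 0, 0, 1])

t, y, yerr = to_relative_ppt(time, flux, flux_err, quality)
print(t, y, yerr)
```

Because the flux is divided by its mean and multiplied by 1e3, a value of 1 on the resulting axis corresponds to a 0.1% change in brightness.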

A Gaussian process model for stellar variability

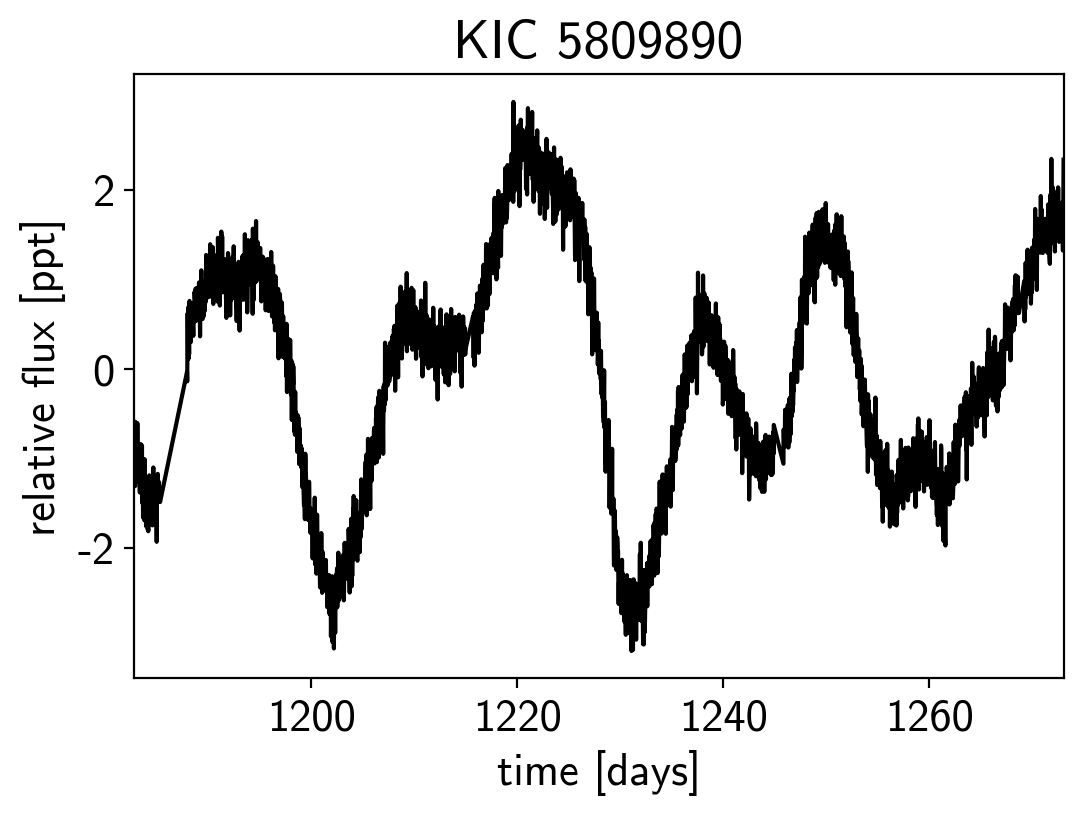

This looks like the light curve of a rotating star, and it has been shown that this kind of variability can be modeled using a quasiperiodic Gaussian process. To start, let's estimate the rotation period using the Lomb-Scargle periodogram:

```python
import exoplanet as xo

results = xo.estimators.lomb_scargle_estimator(
    x, y, max_peaks=1, min_period=5.0, max_period=100.0,
    samples_per_peak=50)

peak = results["peaks"][0]
freq, power = results["periodogram"]

# -log10(freq) is log10(period), so the peak period marks the main peak
plt.plot(-np.log10(freq), power, "k")
plt.axvline(np.log10(peak["period"]), color="k", lw=4, alpha=0.3)
plt.xlim((-np.log10(freq)).min(), (-np.log10(freq)).max())
plt.yticks([])
plt.xlabel("log10(period)")
plt.ylabel("power");
```
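Here lomb_scargle_estimator is used as a black box. To show what the periodogram peak represents, below is a minimal NumPy sketch of the classical Lomb-Scargle periodogram applied to a synthetic sinusoid with a 29-day period. It is not exoplanet's implementation: the helper name lomb_scargle_power is hypothetical, and the grid spacing 1 / (samples_per_peak * baseline) is an assumption based on a common convention for oversampling a periodogram.

```python
import numpy as np


def lomb_scargle_power(t, y, freqs):
    """Classical Lomb-Scargle power of y(t) at each frequency in `freqs` (cycles per day)."""
    y = y - np.mean(y)
    omega = 2.0 * np.pi * np.asarray(freqs)[:, None]  # shape (n_freq, 1)
    # The offset tau makes the sine and cosine terms orthogonal at each frequency
    tau = np.arctan2(np.sum(np.sin(2.0 * omega * t), axis=1),
                     np.sum(np.cos(2.0 * omega * t), axis=1)) / (2.0 * omega[:, 0])
    arg = omega * (t - tau[:, None])
    c, s = np.cos(arg), np.sin(arg)
    return 0.5 * ((c @ y) ** 2 / np.sum(c**2, axis=1)
                  + (s @ y) ** 2 / np.sum(s**2, axis=1))


# Synthetic, irregularly sampled sinusoid (a stand-in for the Kepler light curve)
rng = np.random.default_rng(42)
t = np.sort(rng.uniform(0.0, 300.0, 500))
true_period = 29.0
y = np.sin(2.0 * np.pi * t / true_period) + 0.1 * rng.normal(size=t.size)

# Frequency grid between 1/max_period and 1/min_period, oversampled by samples_per_peak
min_period, max_period, samples_per_peak = 5.0, 100.0, 50
df = 1.0 / (samples_per_peak * (t.max() - t.min()))
freqs = np.arange(1.0 / max_period, 1.0 / min_period, df)
power = lomb_scargle_power(t, y, freqs)
best_period = 1.0 / freqs[np.argmax(power)]
print(f"periodogram peak at {best_period:.2f} days")
```

The tutorial plots power against -log10(freq), which is simply log10 of the period, so the highest peak in that plot plays the role of best_period here.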

Now, using this period estimate for initialization, we can set up the GP model in exoplanet. We'll use the exoplanet.gp.terms.RotationTerm kernel, which is a mixture of two simple harmonic oscillators with periods separated by a factor of two. As you can see from the periodogram above, this might be a good model for this light curve, and I've found that it works well in many cases.
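To make "a mixture of two simple harmonic oscillators" concrete, the sketch below evaluates the autocovariance of a damped, stochastically driven simple harmonic oscillator (the underdamped, Q > 1/2, form of the SHO kernel used by celerite) and sums two such terms at periods P and P/2. The mapping from amp, Q0, dQ and mix onto the two terms (Q1 = 1/2 + Q0 + dQ, Q2 = 1/2 + Q0, secondary variance scaled by mix) is an assumption chosen for illustration, loosely mirroring exp(logamp), exp(logQ0), exp(logdeltaQ) and mix in the model below; check the exoplanet source for the exact RotationTerm parameterization.

```python
import numpy as np


def sho_autocov(tau, S0, w0, Q):
    """Autocovariance of a white-noise-driven damped SHO; valid for Q > 1/2."""
    tau = np.abs(tau)
    eta = np.sqrt(1.0 - 1.0 / (4.0 * Q**2))
    return S0 * w0 * Q * np.exp(-w0 * tau / (2.0 * Q)) * (
        np.cos(eta * w0 * tau) + np.sin(eta * w0 * tau) / (2.0 * eta * Q)
    )


def sho_term(tau, variance, period, Q):
    """SHO term whose oscillations have the given period and whose k(0) is `variance`."""
    # eta * w0 = 2 pi / period  gives  w0 = 4 pi Q / (period * sqrt(4 Q^2 - 1))
    w0 = 4.0 * np.pi * Q / (period * np.sqrt(4.0 * Q**2 - 1.0))
    S0 = variance / (w0 * Q)  # because k(0) = S0 * w0 * Q
    return sho_autocov(tau, S0, w0, Q)


def rotation_like_kernel(tau, amp, period, Q0, dQ, mix):
    """Hypothetical mixture: one SHO at `period` plus a weaker one at `period / 2`."""
    Q1 = 0.5 + Q0 + dQ  # primary term; Q0, dQ > 0 keep both Q values above 1/2
    Q2 = 0.5 + Q0       # secondary term
    return sho_term(tau, amp, period, Q1) + sho_term(tau, mix * amp, 0.5 * period, Q2)


# Evaluate the kernel on an irregular time grid (synthetic times, in days)
rng = np.random.default_rng(0)
t = np.sort(rng.uniform(0.0, 100.0, 200))
K = rotation_like_kernel(t[:, None] - t[None, :], amp=1.0, period=29.0,
                         Q0=2.0, dQ=5.0, mix=0.5)
print(K[0, 0])                      # equals amp * (1 + mix) up to round-off
print(np.linalg.eigvalsh(K).min())  # non-negative up to round-off for a valid kernel
```

Forming the full N x N matrix like this is only practical for small N; the scalable GPs in exoplanet are designed for large datasets where this would be too slow.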

```python
import pymc3 as pm
import theano.tensor as tt

with pm.Model() as model:

    # The mean flux of the time series
    mean = pm.Normal("mean", mu=0.0, sd=10.0)

    # A jitter term describing excess white noise
    logs2 = pm.Normal("logs2", mu=2*np.log(np.min(yerr)), sd=5.0)

    # The parameters of the RotationTerm kernel
    logamp = pm.Normal("logamp", mu=np.log(np.var(y)), sd=5.0)
    logperiod = pm.Normal("logperiod", mu=np.log(peak["period"]), sd=5.0)
    logQ0 = pm.Normal("logQ0", mu=1.0, sd=10.0)
    logdeltaQ = pm.Normal("logdeltaQ", mu=2.0, sd=10.0)
    mix = pm.Uniform("mix", lower=0, upper=1.0)

    # Track the period as a deterministic
    period = pm.Deterministic("period", tt.exp(logperiod))

    # Set up the Gaussian Process model
    kernel = xo.gp.terms.RotationTerm(
        log_amp=logamp,
        period=period,
        log_Q0=logQ0,
        log_deltaQ=logdeltaQ,
        mix=mix
    )
    gp = xo.gp.GP(kernel, x, yerr**2 + tt.exp(logs2), J=4)

    # Compute the Gaussian Process likelihood and add it into the
    # PyMC3 model as a "potential"
    pm.Potential("loglike", gp.log_likelihood(y - mean))

    # Compute the mean model prediction for plotting purposes
    pm.Deterministic("pred", gp.predict())

    # Optimize to find the maximum a posteriori parameters
    map_soln = pm.find_MAP(start=model.test_point)
```

```
logp = 694.35, ||grad|| = 0.014649: 100%|██████████| 23/23 [00:00<00:00, 238.27it/s]
```
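The pm.Potential line adds the GP marginal log-likelihood of the residuals r = y - mean to the model, with yerr**2 + exp(logs2) on the diagonal of the covariance C. That quantity is log L = -1/2 r^T C^{-1} r - 1/2 log|C| - (N/2) log(2 pi). exoplanet evaluates it with its scalable solver; for comparison, here is a minimal sketch that computes the same kind of quantity the slow way, with a dense Cholesky factorization in NumPy (O(N^3), so only for small N). The damped_cos_kernel stand-in, the gp_log_likelihood helper and all the toy data are hypothetical, not the RotationTerm model above.

```python
import numpy as np


def damped_cos_kernel(tau, amp=1.0, period=10.0, timescale=20.0):
    """Hypothetical stand-in kernel (exponentially damped cosine), not RotationTerm."""
    return amp * np.exp(-np.abs(tau) / timescale) * np.cos(2.0 * np.pi * tau / period)


def gp_log_likelihood(t, resid, diag_var, kernel):
    """Dense O(N^3) log-likelihood of `resid` under a zero-mean GP plus diagonal noise."""
    C = kernel(t[:, None] - t[None, :]) + np.diag(diag_var)
    L = np.linalg.cholesky(C)                  # C = L @ L.T
    z = np.linalg.solve(L, resid)              # z @ z == resid^T C^{-1} resid
    log_det = 2.0 * np.sum(np.log(np.diag(L)))
    return -0.5 * (z @ z + log_det + len(t) * np.log(2.0 * np.pi))


rng = np.random.default_rng(1)
t = np.sort(rng.uniform(0.0, 50.0, 100))
yerr = np.full(t.size, 0.1)
logs2 = np.log(0.01)                           # excess white-noise variance (the jitter)
y = np.sin(2.0 * np.pi * t / 10.0) + 0.1 * rng.normal(size=t.size)
mean = 0.0

# Same structure as the model: residuals y - mean, diagonal yerr**2 + exp(logs2)
loglike = gp_log_likelihood(t, y - mean, yerr**2 + np.exp(logs2), damped_cos_kernel)
print(loglike)
```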

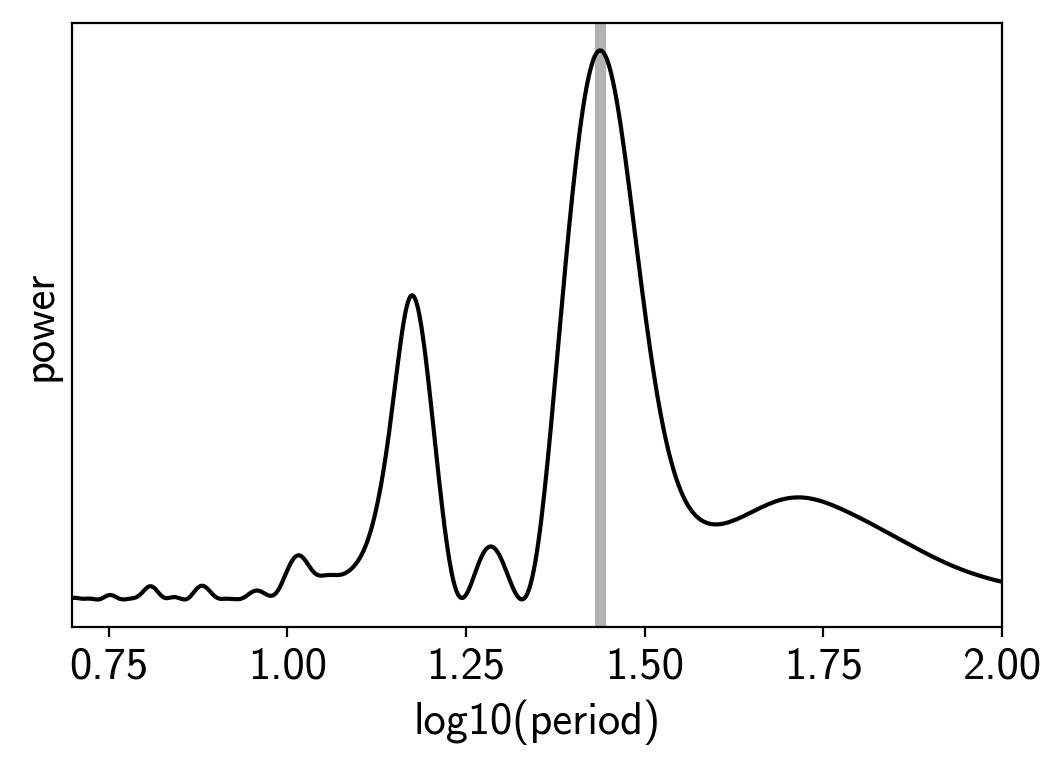

Now that we have the model set up, let’s plot the maximum a posteriori model prediction.

```python
plt.plot(x, y, "k", label="data")
plt.plot(x, map_soln["pred"], color="C1", label="model")
plt.xlim(x.min(), x.max())
plt.legend(fontsize=10)
plt.xlabel("time [days]")
plt.ylabel("relative flux [ppt]")
plt.title("KIC 5809890; map model");
```
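As we read it, gp.predict() called with no arguments returns the GP's conditional mean at the observed times, which is the orange model curve. For a zero-mean GP with kernel matrix K and diagonal noise N, that mean is K (K + N)^{-1} r for residuals r. Here is a small dense NumPy sketch of that formula, using a hypothetical stand-in kernel and synthetic data rather than exoplanet's implementation:

```python
import numpy as np


def damped_cos_kernel(tau, amp=1.0, period=10.0, timescale=20.0):
    """Hypothetical stand-in kernel (exponentially damped cosine), not RotationTerm."""
    return amp * np.exp(-np.abs(tau) / timescale) * np.cos(2.0 * np.pi * tau / period)


def gp_predict_mean(t, resid, diag_var, kernel):
    """Conditional mean of a zero-mean GP at the observed times, given noisy residuals."""
    K = kernel(t[:, None] - t[None, :])
    alpha = np.linalg.solve(K + np.diag(diag_var), resid)   # (K + N)^{-1} r
    return K @ alpha                                         # K (K + N)^{-1} r


rng = np.random.default_rng(2)
t = np.sort(rng.uniform(0.0, 50.0, 150))
diag_var = np.full(t.size, 0.05)
signal = np.sin(2.0 * np.pi * t / 10.0)
resid = signal + np.sqrt(diag_var) * rng.normal(size=t.size)

pred = gp_predict_mean(t, resid, diag_var, damped_cos_kernel)
print(np.std(resid - signal), np.std(pred - signal))
```

Because the white-noise variance only enters through (K + N)^{-1}, the prediction is a smoothed version of the residuals, and in this toy example it tracks the underlying signal more closely than the noisy data do.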

That looks pretty good! Now let's sample from the posterior using an exoplanet.PyMC3Sampler.

```python
np.random.seed(42)
sampler = xo.PyMC3Sampler()
with model:
    # Tune starting from the MAP solution, then draw posterior samples
    sampler.tune(tune=2000, start=map_soln, step_kwargs=dict(target_accept=0.9))
    trace = sampler.sample(draws=2000)
```

```
Sampling 4 chains: 100%|██████████| 308/308 [00:24<00:00, 2.61draws/s]
Sampling 4 chains: 100%|██████████| 108/108 [00:10<00:00, 9.86draws/s]
Sampling 4 chains: 100%|██████████| 208/208 [00:04<00:00, 50.18draws/s]
Sampling 4 chains: 100%|██████████| 408/408 [00:06<00:00, 65.17draws/s]
Sampling 4 chains: 100%|██████████| 808/808 [00:12<00:00, 64.76draws/s]
Sampling 4 chains: 100%|██████████| 1608/1608 [00:21<00:00, 76.45draws/s]
Sampling 4 chains: 100%|██████████| 4608/4608 [01:11<00:00, 64.33draws/s]
Multiprocess sampling (4 chains in 4 jobs)
NUTS: [mix, logdeltaQ, logQ0, logperiod, logamp, logs2, mean]
Sampling 4 chains: 100%|██████████| 8200/8200 [02:44<00:00, 49.92draws/s]
There was 1 divergence after tuning. Increase target_accept or reparameterize.
The acceptance probability does not match the target. It is 0.9722822521454982, but should be close to 0.9. Try to increase the number of tuning steps.
```

Now we can do the usual convergence checks:

```python
pm.summary(trace, varnames=["mix", "logdeltaQ", "logQ0", "logperiod", "logamp", "logs2", "mean"])
```

| parameter | mean | sd | mc_error | hpd_2.5 | hpd_97.5 | n_eff | Rhat |
|---|---|---|---|---|---|---|---|
| mix | 0.612192 | 0.246607 | 0.004753 | 0.184111 | 0.999968 | 2390.827873 | 1.001618 |
| logdeltaQ | 1.258932 | 9.498497 | 0.154109 | -16.370452 | 21.533913 | 3799.856881 | 0.999809 |
| logQ0 | 0.634067 | 0.577331 | 0.009861 | -0.482119 | 1.838512 | 3253.935654 | 1.000323 |
| logperiod | 3.367242 | 0.121002 | 0.002092 | 3.189152 | 3.651945 | 3173.838509 | 1.000147 |
| logamp | -0.085608 | 0.572374 | 0.010271 | -1.113512 | 1.038443 | 2806.723951 | 1.000188 |
| logs2 | -4.968817 | 0.121865 | 0.001696 | -5.212865 | -4.737115 | 5660.208712 | 0.999860 |
| mean | -0.017287 | 0.216807 | 0.003465 | -0.426309 | 0.413271 | 3592.780697 | 0.999810 |
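The Rhat column is the Gelman-Rubin statistic, which compares the variance between chains to the variance within them; every value here is within about 0.002 of 1, which indicates that the four chains agree. Below is a minimal NumPy sketch of the classic, non-split form of the statistic on synthetic chains. It is an illustration only: gelman_rubin is a hypothetical helper, and PyMC3's own implementation may differ in details.

```python
import numpy as np


def gelman_rubin(chains):
    """Classic (non-split) potential scale reduction factor for an (m_chains, n_draws) array."""
    chains = np.asarray(chains, dtype=float)
    m, n = chains.shape
    chain_means = chains.mean(axis=1)
    B = n * chain_means.var(ddof=1)            # between-chain variance
    W = chains.var(axis=1, ddof=1).mean()      # mean within-chain variance
    var_hat = (n - 1) / n * W + B / n          # pooled estimate of the posterior variance
    return np.sqrt(var_hat / W)


rng = np.random.default_rng(3)
mixed = rng.normal(size=(4, 2000))                       # four chains from the same distribution
stuck = mixed + np.array([0.0, 0.0, 0.0, 3.0])[:, None]  # one chain offset from the others
print(gelman_rubin(mixed))  # close to 1
print(gelman_rubin(stuck))  # well above 1
```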

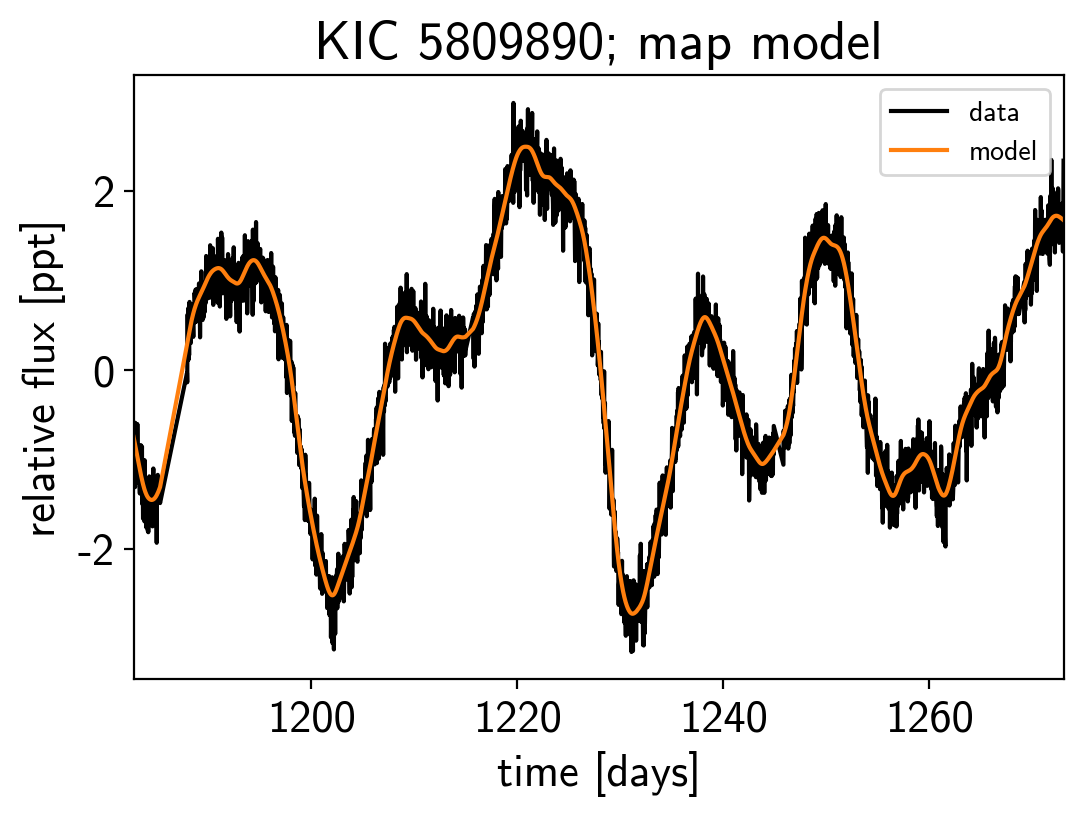

Finally, let's plot the posterior distribution over the rotation period:

```python
period_samples = trace["period"]
bins = np.linspace(20, 45, 40)
plt.hist(period_samples, bins, histtype="step", color="k")
plt.yticks([])
plt.xlim(bins.min(), bins.max())
plt.xlabel("rotation period [days]")
plt.ylabel("posterior density");
```
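The hpd_2.5 and hpd_97.5 columns of the summary table bound the 95% highest posterior density (HPD) interval, which for a unimodal posterior is the narrowest interval containing 95% of the samples. For reference, the logperiod mean of about 3.37 corresponds to a period of roughly exp(3.37) ≈ 29 days. Here is a minimal NumPy sketch of that interval computed from samples like trace["period"]; hpd_interval is a hypothetical helper, and it runs on synthetic lognormal samples rather than the real trace.

```python
import numpy as np


def hpd_interval(samples, mass=0.95):
    """Narrowest interval containing a fraction `mass` of the samples (assumes unimodality)."""
    x = np.sort(np.asarray(samples))
    n = len(x)
    k = int(np.floor(mass * n))      # each candidate interval [x[i], x[i + k]] spans k + 1 samples
    widths = x[k:] - x[: n - k]      # width of every candidate interval
    i = np.argmin(widths)
    return x[i], x[i + k]


rng = np.random.default_rng(4)
# Synthetic stand-in for period samples: lognormal, like exp(logperiod) (not the real trace)
period_samples = np.exp(rng.normal(3.37, 0.12, size=8000))
lo, hi = hpd_interval(period_samples)
print(f"median {np.median(period_samples):.1f} d, 95% HPD [{lo:.1f}, {hi:.1f}] d")
```

Note that an HPD interval is not invariant under a change of variables, so the interval for period is not simply exp() of the logperiod bounds in the table.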